Table of Contents

Overview

The most basic problem in Numerical Analysis (methods) is the root-finding problem.

For a given function f(x), the process of finding the root involves finding the value of x for which f(x) = 0. If the function equals zero, x is the root of the function.

A root of the equation f(x) = 0 is also called a zero of the function f(x).

The Bisection Method, also called the interval halving method, the binary search method, or the dichotomy method. is based on the Bolzano’s theorem for continuous functions.

Theorem (Bolzano): If a function f(x) is continuous on an interval [a, b] and f(a)·f(b) < 0, then a value c ∈ (a, b) exist for which f(c) = 0.

A function is continuous when small changes of the argument gives also in small changes in the result. In other words, if x changes with small steps f(x) will also change with small steps, it doesn’t give big “jumps” of the result.

f(a)·f(b) < 0 means that f(a) and f(b) have different signs, which means that one of them is above the x-axis and the other one below the x-axis. In this case, if we plot the f(x) function, at some point, it will cross the x-axis. The x value for which the plot is crossing the x-axis is the root of the equation f(x) = 0.

Formula

The Bisection Method looks to find the value c for which the plot of the function f crosses the x-axis. The c value is in this case is an approximation of the root of the function f(x). How close the value of c gets to the real root depends on the value of the tolerance we set for the algorithm.

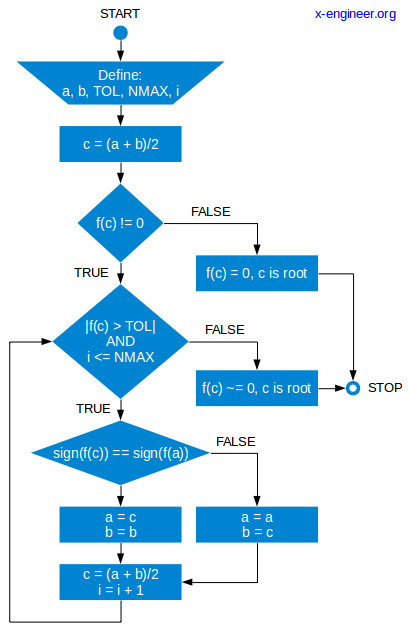

For a given function f(x),the Bisection Method algorithm works as follows:

- two values a and b are chosen for which f(a) > 0 and f(b) < 0 (or the other way around)

- interval halving: a midpoint c is calculated as the arithmetic mean between a and b, c = (a + b) / 2

- the function f is evaluated for the value of c

- if f(c) = 0 means that we found the root of the function, which is c

- if f(c) ≠ 0 we check the sign of f(c):

- if f(c) has the same sign as f(a) we replace a with c and we keep the same value for b

- if f(c) has the same sign as f(b), we replace b with c and we keep the same value for a

- we go back to step 2. and recalculate c with the new value of a or b

The algorithm ends when the values of f(c) is less than a defined tolerance (e.g. 0.001). In this case we say that c is close enough to be the root of the function for which f(c) ~= 0.

In order to avoid too many iterations, we can set a maximum number of iterations (e.g. 1000) and even if we are above the defined tolerance, we keep the last value of c as the root of our function.

Logical diagram

Example

The best way of understanding how the algorithm work is by looking at an example.

For the function f(x) below find the best approximation of the root given the tolerance of TOL = 0.01 and a maximum of NMAX = 1000 iterations.

\[f(x) = 10 – x^2 \]In the table below we are going to calculate the values described in the logic diagram above:

| i | a | b | c | f(a) | f(b) | f(c) |

| 0 | -2 | 5 | 1.5 | 6 | -15 | 7.75 |

| 1 | 1.5 | 5 | 3.25 | 7.75 | -15 | -0.5625 |

| 2 | 1.5 | 3.25 | 2.375 | 7.75 | -0.5625 | 4.359375 |

| 3 | 2.375 | 3.25 | 2.8125 | 4.359375 | -0.5625 | 2.0898438 |

| 4 | 2.8125 | 3.25 | 3.03125 | 2.0898438 | -0.5625 | 0.8115234 |

| 5 | 3.03125 | 3.25 | 3.140625 | 0.8115234 | -0.5625 | 0.1364746 |

| 6 | 3.140625 | 3.25 | 3.1953125 | 0.1364746 | -0.5625 | -0.2100220 |

| 7 | 3.140625 | 3.1953125 | 3.1679688 | 0.1364746 | -0.2100220 | -0.0360260 |

| 8 | 3.140625 | 3.1679688 | 3.1542969 | 0.1364746 | -0.0360260 | 0.0504112 |

| 9 | 3.1542969 | 3.1679688 | 3.1611328 | 0.0504112 | -0.0360260 | 0.0072393 |

At initialization (i = 0) we choose a = -2 and b = 5. After evaluating the function in both points we can see that f(a) is positive while f(b) is negative. This means that between these points, the plot of the function will cross the x-axis in a particular point, which is the root we need to find.

After 9 iterations the value of f(c) is less than our defined tolerance (0.0072393 < 0.01). This means that the value that approximates best the root of the function f is the last value of c = 3.1611328.

We can check our result by solving our quadratic equations in a classic way:

\[ \begin{equation*} \begin{split}10 – x^2 &= 0\\

x^2 &= 10\\

x &= \sqrt{10} = \pm 3.16227766

\end{split} \end{equation*} \]

Below you can see an animation of the f(x) plot for every iteration. Notice how the [a, b] interval (red horizontal line) is halved at each iteration, until it’s converging into a point where the plot is crossing the x-axis (value of x in that point being the root of the function f).

C code

The example calculated in the table is also executed in the C code below. The results are the same as those calculated in the table. Use this C code (copy and past to into a *.c file and execute) to examine the impact of tolerance (e.g 0.0001) on the number of iterations necessary to converge to a solution.

#include<stdio.h>

#include<math.h>

/* SIGN function definition as a macro */

#define sign(x) (x > 0) ? 1 : ((x < 0) ? -1 : 0)

/* functions prototype */

float f (float x);

int f_disp (int i, float a, float b, float c);

int main(void){

float a = -2; /* left point */

float b = 5; /* right point */

float TOL = 1E-2; /* tolerance */

float NMAX = 1000; /* maximum number of iterations */

float c = 0; /* estimated root */

int i = 0; /* index */

c = (a + b)/2; /* calculate the midpoint */

/* Display the table header and initial data */

printf("i\ta\t\tb\t\tc\t\tf(a)\t\tf(b)\t\tf(c)\n");

f_disp(i, a, b, c);

/* Evaluate loop until the result is less than the tolerance

* maximum number of iterations is not yet reached*/

if (f(c) == 0){

/* If the first midpoint gives f(c) = 0, c is the root */

printf("Root is: %f \n", c);}

else{

while ((fabs(f(c)) > TOL) && (i<=NMAX)){

if (sign(f(c)) == sign(f(a))){

/* f(c) has same sign as f(a) */

a = c;}

else{

/* f(c) has same sign as f(b) */

b = c;}

c = (a+b)/2; /* midpoint update */

f_disp(i+1, a, b, c); /* display current data */

i++; /* index increment */}}

/* Display results */

printf("\nRoot is c=%.7f found after %d iterations\n", c, i);

printf("The value of the function f(c) is: %.10f\n", f(c));

return 0;}

/* function definition */

float f (float x){

float y;

y = 10 - x*x;

return y;}

/* display function definition */

int f_disp (int i, float a, float b, float c){

printf("%d\t%.7f\t%.7f\t%.7f\t", i, a, b, c);

printf("%.7f\t%.7f\t%.7f\n", f(a), f(b), f(c));

return 0;} Scilab code

The same algorithm is implemented in a Scilab script. Play with the a, b, TOL and NMAX parameters to understand their impact on the method.

// Function definition

deff('y=f(x)','y=10-x^2;');

// Parameter definition

a = -2; // left point

b = 5; // right point

TOL = 1e-2; // tolerance

NMAX = 1000; // maximum number of iterations

c = (a+b)/2; // midpoint

i = 0; // index

// Loop until function result less than tolerance AND

// maximum number of iternations is not yet reached

if (f(c) == 0)

// If the first midpoint gives f(c) = 0, c is the root

disp(['Root is: ' string(c)]);

else

while ((abs(f(c)) > TOL) & (i <= NMAX))

if (sign(f(c)) == sign(f(a)))

// f(c) has same sign as f(a)

a = c;

else

// f(c) has same sign as f(b)

b = c;

end

c = (a+b)/2; // midpoint update

i = i + 1; // index increment

end

end

clc();

// Display results

format(10); // number of decimal places to display

disp(['Root is: ' string(c) ', found in ' string(i) ' steps.'])

disp(['The value of the function f(c) is: ' string(f(c))]) Tolerance

As you can see, the Bisection Method converges to a solution which depends on the tolerance and number of iteration the algorithm performs. There is a dependency between the tolerance and number of iterations. For a particular tolerance we can calculate how many iterations n we need to perform.

Every iteration the algorithm generates a series of intervals [an, bn] which is half of the previous one:

\[b_n – a_n = \frac{b-a}{2^n} =|c-x| \tag{1}\]The tolerance ε is the absolute value of the difference between the actual root of the function x and the approximation c.

\[\varepsilon=|c-x| \tag{2}\]For the algorithm to converge within an absolute error tolerance (ε), we need to have:

\[|c-x| \le \varepsilon \tag{3}\]By replacing inequality (3) with equation (1), we get:

\[\frac{b-a}{2^n}\le \varepsilon \tag{4}\]Solving inequality (4), we obtain:

\[2^n \ge \frac{b-a}{\varepsilon} \tag{5}\]We apply the logarithm function to both sides of the inequality (5):

\[log(2^n) \ge log\left (\frac{b-a}{\varepsilon} \right ) \tag{6}\]Solving (6), we get:

\[ \begin{equation*} \begin{split}n \cdot log(2) &\ge log\left (\frac{b-a}{\varepsilon} \right )\\

n &\ge \frac{log\left (\frac{b-a}{\varepsilon} \right )}{log(2)}

\end{split} \end{equation*} \]

From the above result we can understand that, using the Bisection Method, in order to calculate an approximate root of a function within tolerance ε, the number n of iterations we need to perform is:

\[ \begin{equation} \begin{split}\bbox[#FFFF9D]{n \ge \frac{log\left (\frac{b-a}{\varepsilon}\right )}{log(2)}}

\end{split} \end{equation} \]

For the example presented in this tutorial our algorithm performed 9 iterations until it found the solution within the imposed tolerance. Let’s apply the above formula to see what is the minimum calculated number of iterations.

\[ \begin{equation*} \begin{split}a &= -2\\

b &= 5\\

\varepsilon &= 0.01\\

n &\ge \frac{log\left (\frac{5+2}{0.01} \right )}{log(2)}\\

n &\ge 9.45

\end{split} \end{equation*} \]

The result shown that we need at least 9 iterations (the integer of 9.45) to converge the solution within the predefined tolerance, which is exactly how many iterations our algorithm performed.

The Bisection Method is a simple root finding method, easy to implement and very robust. The disadvantages of this method is that it’s relatively slow. Because of this, most of the time, the bisection method is used as a starting point to obtain a rough value of the solution which is used later as a starting point for more rapidly converging methods.

Poster

The Bisection Method is summarized in the poster below:

Quiz

Test your knowledge in the Bisection Method by taking the quiz below:

For any questions or observations regarding this tutorial please use the comment form below.

Don’t forget to Like, Share and Subscribe!

dam

Thank you for this!

Hussain Khan

I been asked to do a bisection method for heat transfer and radiation. the function (Ts) = a.Ts^4 + b .ts + c. I need to determine a,b and c

John Lee

There is an issue with the algorithm.

The tolerance should be set for |a-b|, not |f(c)| because if the f is very close to the x axis, say nearly in parallel with x axis, even |f(c)| is very small, but x could be still far far away from the root.

For example if the function f is

f(x)=0.0000001x, the f(c) could be very small and inside the given tolerance, but x could be very far from the root.

lonz

nice in depth

mahmoudali ali

very useful information